How I self-host with Ansible - Multi-server Container Deployments with Nomad

Essay - Published: 2025.12.19 | 8 min read (2,175 words)

ansible | create | nomad

DISCLOSURE: If you buy through affiliate links, I may earn a small commission. (disclosures)

I recently overhauled my hosting / deployment infrastructure as an incident followup to my site going down in 2025.11. Before that I'd moved from Coolify to Ansible and before that from serverless cloud hosting to my own infrastructure.

In this post we're going to walk through my current infra and why I chose it.

How I host my apps

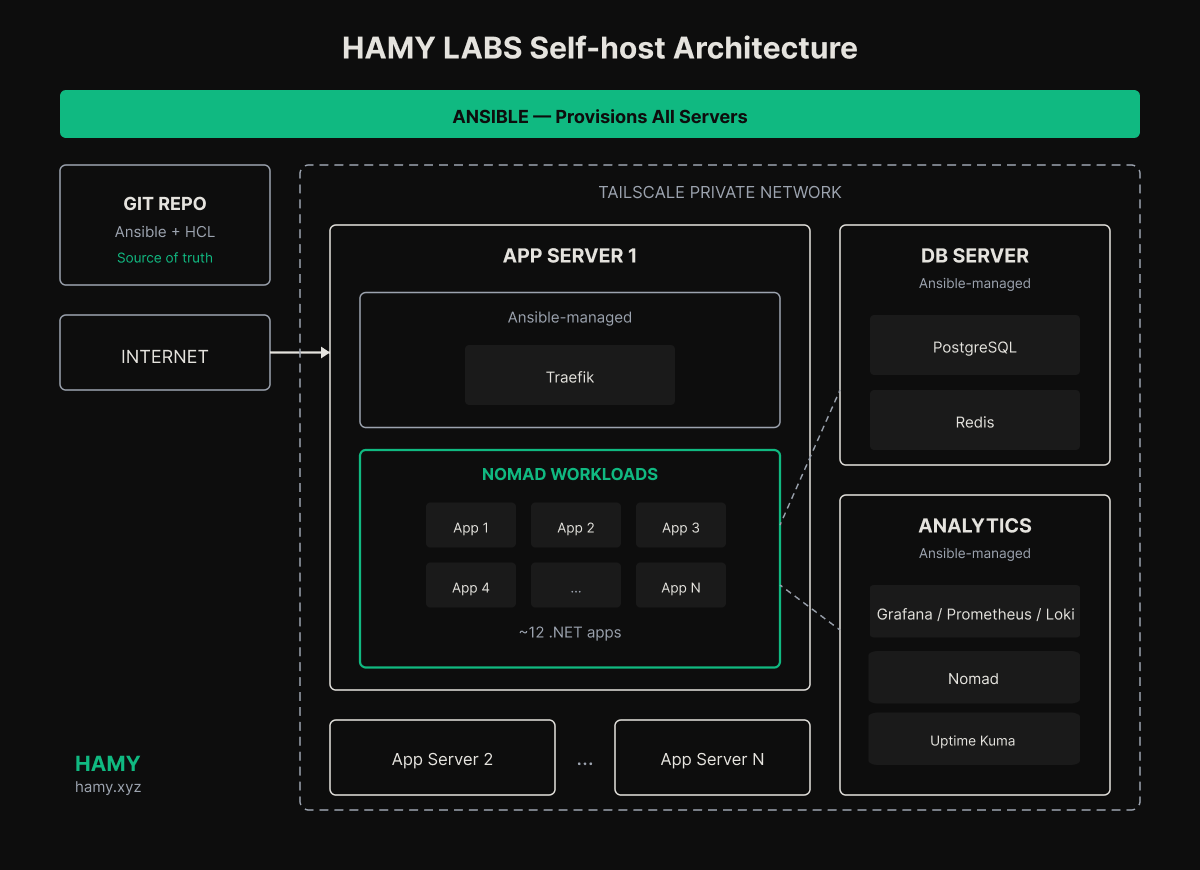

I have a 3 server (role) setup:

- Analytics server - Hosts my analytics, monitoring, and admin workloads like Grafana, Prometheus, Loki, Uptime Kuma, and Umami. Separated for fault tolerance.

- DB Server - Hosts my Postgres and Redis DBs. Separated for performance and fault tolerance.

- App Server(s) - Hosts all my apps. Currently just 1 server but can spin up to n. Uses Nomad for app deploys, rollouts, healthchecks, etc.

All servers are configured to be part of my Tailscale network which allows me to close most external ports on my servers while still allowing them to talk to each other. I also hook my personal computers up to the Tailscale network so they get privileged access to things like my analytics dashboards.
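
To make the "close most external ports" idea concrete, here is a minimal sketch of the access rule it implies. Tailscale assigns node addresses from the 100.64.0.0/10 range; the set of ports left open to the public (80 and 443) is an assumption for illustration, not a dump of the real firewall config.

```python
import ipaddress

# Tailscale hands out node addresses from the CGNAT range 100.64.0.0/10.
TAILNET = ipaddress.ip_network("100.64.0.0/10")

# Assumption: only HTTP/HTTPS stay open to the public internet.
PUBLIC_PORTS = {80, 443}


def is_allowed(src_ip: str, dst_port: int) -> bool:
    """Return True if a connection from src_ip to dst_port should be accepted."""
    if dst_port in PUBLIC_PORTS:
        return True
    # Everything else (SSH, Postgres, dashboards) is reachable only over the tailnet.
    return ipaddress.ip_address(src_ip) in TAILNET
```

With a rule like this, a random internet host can load a site on 443 but cannot reach Postgres on 5432, while a laptop on the tailnet can.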

Why I use Ansible

When I first started self-hosting, I chose Coolify - an opensource PaaS. This allowed me to get the price:performance ratio of a VPS while allowing me to manage my servers and apps with a cloud-like UI I was used to.

Coolify worked great for a couple of apps, but I realized that each new app required about the same amount of effort to set up. I had to dive through UI flows, set various environment variables, and copy/paste my configurations over. It wasn't terrible, but it felt like a lot of manual effort and opened the door to copypasta issues. Worse, if Coolify ever went down I'd have no way of recovering the setups I had before, which would make disaster recovery messy.

Coolify does support recovery from backups, but reliability is spotty and IME the server-to-server transfers are annoying, so I often sidestepped them by recreating the apps manually.

So I decided to move from Coolify to Ansible. It gave me more control over my servers, and because all the config lives in code I can easily reuse variables / configs across apps and stand up entirely new servers to host my infrastructure in a couple of minutes. I've proven this numerous times in testing by spinning up new servers and flashing them with my setup.

How I use Ansible

I use Ansible to roll out my infrastructure in phases:

- Server Foundations - All Servers - Hardens OS, sets up auto updates, connects to Tailscale and locks down all other access, installs Docker to run my containers, and preinstalls monitoring daemons. All servers get the same base setup and then workload-specific tweaks are set up in the other playbooks.

- Core Services - Analytics + DB Servers - Creates the analytics and db servers, installs appropriate apps / configurations, and opens access for the machines / apps that need them.

- App Servers - Creates the app servers with environment variables pointing them to the appropriate analytics and db servers. Each app server is a Nomad client so workloads can be provisioned on them from the Nomad orchestrator.
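
The phases above can be sketched as a small data model: an inventory of roles and an ordered list of phases, each targeting some roles. This is only an illustration in Python, not the actual playbooks; the host names and phase identifiers are made up.

```python
# Hypothetical inventory: role -> hosts. Host names are made up.
INVENTORY = {
    "analytics": ["analytics-1"],
    "db": ["db-1"],
    "app": ["app-1"],
}

# Phases run in order; each one targets a set of roles.
PHASES = [
    ("server-foundations", ["analytics", "db", "app"]),
    ("core-services", ["analytics", "db"]),
    ("app-servers", ["app"]),
]


def rollout_plan(inventory, phases):
    """Expand phases into an ordered list of (phase, host) steps."""
    plan = []
    for phase, roles in phases:
        for role in roles:
            for host in inventory.get(role, []):
                plan.append((phase, host))
    return plan
```

Adding a second app server is then a one-line inventory change: it picks up the foundations phase and the app phase without touching the rest.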

Analytics Server

This is the monitoring / supervisor server. It coordinates things and gives me a window into the other servers. If there's one special server in my architecture it's this one. The others are workers that could be scaled 1...n, but this one would be tough to scale.

- Monitoring - Grafana, Prometheus, Loki. Probably overkill but I figured if I'm going to do the work, I might as well just get the full power of it. Each server is seeded with exporters to get server data / logs and each server role has exporters to get data / logs from their specific workloads. I also run Umami for web analytics.

Uptime Kuma was way easier to setup than I expected. Now have periodic healthchecks for my sites with notifications going out to a personal discord server. pic.twitter.com/hCj9SdnWmC

Hamilton Greene 🐷🦔 (@SIRHAMY), November 26, 2025

- Healthchecks - I installed Uptime Kuma as an incident followup to give me uptime monitoring across all my apps. It was super simple to install (literally just a Docker container added via my Ansible script) and has been working well. Grafana should be able to do something similar but I found Uptime Kuma was way simpler so just went with that. I set up a personal Discord server to receive automatic notifications from my infra, which has worked well and is simpler than dealing with email SMTP config.

- Orchestration - I decided to put the Nomad server on my analytics server because it already serves as a kind of overview / admin server anyway. Nomad uses a server / client model, so it made sense to have one server and n clients, which would be the app servers.
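
For a feel of what the healthcheck-plus-Discord loop boils down to, here is a minimal sketch. It is not how Uptime Kuma is implemented; the function names are hypothetical and the webhook URL is something you would supply yourself. Discord webhooks accept a JSON body with a `content` field.

```python
import json
import urllib.request


def check_site(url, fetch_status):
    """Return (is_up, detail). fetch_status is any callable url -> HTTP status code."""
    try:
        status = fetch_status(url)
    except Exception as exc:  # DNS failure, timeout, HTTP error raised by urllib, ...
        return False, f"error: {exc}"
    return 200 <= status < 400, f"HTTP {status}"


def http_status(url, timeout=10):
    """Real fetcher: GET the URL and return its status code."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.status


def discord_payload(site, is_up, detail):
    """Build the JSON body for a Discord webhook message."""
    state = "UP" if is_up else "DOWN"
    return {"content": f"[{state}] {site} ({detail})"}


def notify(webhook_url, payload):
    """POST the payload to a Discord webhook URL (placeholder, supply your own)."""
    req = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumption: set an explicit User-Agent in case the default is rejected.
            "User-Agent": "uptime-sketch/0.1",
        },
        method="POST",
    )
    urllib.request.urlopen(req, timeout=10)
```

Passing the fetcher in as an argument keeps the check testable without touching the network; in real use you would call `check_site(url, http_status)` on a timer and `notify(...)` only when the state changes.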

DB Server

The db server is where I host my databases. I chose to have it on its own server as I wanted to play around with multi-server paradigms and figured it would scale better - the apps and dbs won't contend for resources and I can scale them independently.

For my usecases this is WAY overkill but now I know how this stuff works and have peace of mind in case I accidentally create a smash hit app (in my dreams).

- Primary - Postgres is the primary because you should probably just use Postgres. Postgres is the GOAT - it does relational and non-relational well, it outperforms most other technologies, it has great tooling support, and it has tons of plugins to configure it to work better for specific usecases like time series or RAG or geo data. So all-in-all it's hard to go wrong with Postgres - especially for my scale.

- Secondary - Redis is the secondary for things like caching and, potentially, non-durable queueing. None of my workloads really need caching but I figured I should just set it up to see how it works and because it's just an extra Docker container to set up with my script.

- PgBouncer - None of my apps will likely scale that high but I do expect to run many apps at the same time - I'm projecting ~50 in the next few years. So the bottleneck for this setup is likely connections, not performance. I looked around and it seems like PgBouncer is the de facto way to help manage connections. It basically works like a queueing system: each app can use Postgres as if it had its own connections open, but Postgres only sees a fraction of that number because PgBouncer queues the work internally and funnels it into a smaller set of real connections (a connection pool). Again overkill for my setup but I feel better knowing this failure mode is covered (or has a pathway to being covered).
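
The queueing idea behind connection pooling can be shown with a toy model: many clients, a small fixed set of connections, and anyone who can't get a connection waits in line. This is a sketch of the concept only, not PgBouncer's implementation; the class and the numbers (50 clients, 5 connections) are illustrative assumptions.

```python
import queue
import threading


class ConnectionPool:
    """Toy pool: many clients share a small, fixed set of server connections."""

    def __init__(self, size):
        self._idle = queue.Queue()
        for i in range(size):
            self._idle.put(f"conn-{i}")  # stand-ins for real Postgres connections
        self._lock = threading.Lock()
        self.in_use = 0
        self.peak_in_use = 0

    def run(self, work):
        conn = self._idle.get()  # blocks (queues) until a connection is free
        with self._lock:
            self.in_use += 1
            self.peak_in_use = max(self.peak_in_use, self.in_use)
        try:
            return work(conn)
        finally:
            with self._lock:
                self.in_use -= 1
            self._idle.put(conn)  # hand the connection back for the next client


def simulate(clients=50, pool_size=5):
    """Run `clients` concurrent queries through a pool of `pool_size` connections."""
    pool = ConnectionPool(pool_size)
    results = []
    results_lock = threading.Lock()

    def client(n):
        out = pool.run(lambda conn: (n, conn))
        with results_lock:
            results.append(out)

    threads = [threading.Thread(target=client, args=(n,)) for n in range(clients)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return pool.peak_in_use, len(results)
```

All 50 simulated clients finish their work, but the database side never sees more than 5 connections in use at once.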

App Servers

The app servers are built to be stateless workers. They're all set up the same and they receive Docker containers to run. The orchestration bit is managed by Nomad and each app server is a "client" to the analytics server's "server". The Nomad server looks at the workloads it has and chooses how to run them on the clients depending on data around app usage and reserved resources.
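
Placement based on reserved resources can be sketched as a best-fit packing problem. Nomad's real scoring is more involved than this; the sketch below only shows the general shape, and the client names and sizes are hypothetical (there is currently just one app server).

```python
from dataclasses import dataclass, field


@dataclass
class Client:
    name: str
    cpu_mhz: int      # CPU still unreserved
    memory_mb: int    # memory still unreserved
    jobs: list = field(default_factory=list)

    def fits(self, job):
        return job.cpu_mhz <= self.cpu_mhz and job.memory_mb <= self.memory_mb


@dataclass
class Job:
    name: str
    cpu_mhz: int      # reserved CPU
    memory_mb: int    # reserved memory


def place(job, clients):
    """Best-fit placement: pick the client that fits the job with least memory left over."""
    candidates = [c for c in clients if c.fits(job)]
    if not candidates:
        return None  # nothing has room; a real scheduler would leave the job pending
    target = min(candidates, key=lambda c: c.memory_mb - job.memory_mb)
    target.cpu_mhz -= job.cpu_mhz
    target.memory_mb -= job.memory_mb
    target.jobs.append(job.name)
    return target.name
```

Packing jobs tightly onto the fullest client that still fits leaves larger gaps free elsewhere for bigger workloads, which is the intuition behind bin-packing schedulers.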

Each server has a Traefik container on it that understands the config mapping of all the URLs and can query Nomad to figure out where to route each request so it hits the appropriate workload. In a more "production" setup I'd probably use Consul for a lot of its capabilities like service discovery but it was simpler for me to just stick with Nomad.
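
Conceptually, that routing step is a two-stage lookup: hostname to service, then service to a healthy instance. The sketch below illustrates the idea only; it does not use Traefik's or Nomad's APIs, and the hostnames, service names, and addresses are made up.

```python
# Hypothetical routing table: hostname -> service name.
ROUTES = {
    "blog.example.com": "blog",
    "api.example.com": "api",
}

# Hypothetical snapshot of what the orchestrator reports: service -> instances.
SERVICES = {
    "blog": [{"address": "100.64.0.10:8080", "healthy": True}],
    "api": [
        {"address": "100.64.0.10:9000", "healthy": False},
        {"address": "100.64.0.11:9000", "healthy": True},
    ],
}


def resolve(host, routes=ROUTES, services=SERVICES):
    """Map a request's Host header to the address of a healthy instance, or None."""
    service = routes.get(host.lower())
    if service is None:
        return None  # unknown hostname
    healthy = [i["address"] for i in services.get(service, []) if i["healthy"]]
    return healthy[0] if healthy else None
```

Because unhealthy instances are filtered out at lookup time, a failing container stops receiving traffic without any change to the routing table.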

Previously I was spinning up the Docker Composes on servers directly via Ansible and having auto deploys via periodic pulls with Watchtower but I ran into a couple issues with it that led me to seek out alternatives. Primarily it seemed like I'd be reinventing the wheel for many things like health checks, blue / green deploys, push-based deploys, resource reservations, and container packing / orchestration. Ansible makes this a lot easier to manage because it's all in code BUT I don't necessarily want to have to maintain all that stuff - the best code is the code never written.
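
To show the kind of logic that would otherwise need maintaining by hand, here is a minimal sketch of a health-gated blue / green cutover. The `start`, `is_healthy`, and `stop` callables are hypothetical stand-ins for whatever actually runs containers; this is not Nomad's deployment logic.

```python
def blue_green_deploy(current, candidate, start, is_healthy, stop, checks=3):
    """Start `candidate` and promote it only if every health check passes.

    start / is_healthy / stop are callables taking a version string.
    Returns the version that is serving traffic afterwards.
    """
    start(candidate)
    for _ in range(checks):
        if not is_healthy(candidate):
            stop(candidate)  # roll back: the old version never stopped serving
            return current
    if current is not None:
        stop(current)  # cut over: retire the old version only after the new one is proven
    return candidate
```

Even this toy version has to handle rollback and ordering, and a real one also needs timeouts, retries, and traffic switching, which is the wheel an orchestrator saves you from reinventing.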

After researching other options, Nomad seemed the most interesting because it sticks with IaC, has a lot of nice integrations / built-ins, is supported by a large co (HashiCorp, for better or worse), and can scale to enterprise scale, all without the "complexity" of a full-blown k8s. Only time will tell if this was a good decision or not but it seems to check all the boxes I was looking for.

FAQ

Why multi-server?

Way overkill but I wanted to try out multi-server and figured if I ever needed to scale - due to so many apps or users - I'd need to figure this out anyway. IaC makes multi-server configurations a lot easier (and maybe even feasible) so felt like a good time to make the leap.

DBs are typically the bottleneck in most apps, so moving the DB to its own server is useful because it no longer fights with apps for resources. Of course if an app gets super big then I'd probably move it and its db to separate servers but with IaC I've already got a blueprint for how that would work.

Why use Nomad vs k8s, Komodo, Coolify, Docker Swarm, Kamal, etc?

There are a lot of different options out there for self hosting and they run the gamut from super flexible to opinionated and DIY to off the shelf.

- PaaS - Komodo and Coolify offer good off-the-shelf, fully-featured PaaS that you can self-host (or use a managed option). These are great and where I'd recommend most people start because you get the UI of the cloud with the price and performance of your own servers. But for me they didn't offer enough IaC which I think is necessary when you are trying to manage dozens of apps across multiple servers.

- Simplified IaC - Kamal, Docker Swarm - These are good and if you're running apps on their own servers alongside their DB I think these are great, simple IaC options. It gets complicated when you're doing multiple apps with shared infra like dbs and monitoring so I felt they didn't add much vs my existing Ansible and docker compose setup.

- K8s - K8s is the enterprise option. It's got everything but you also need to understand everything. Basically everything I read said K8s can do it but you should consider every other option first because once you're in there it gets complicated fast. I just wasn't sure I wanted to go down the k8s route yet, though if Nomad fails me, k8s might be my next choice.

Nomad seemed to fit in a gap where it was simpler than k8s but brought with it a lot of the common things I wanted - service discovery, orchestration, routing, health checks, blue / green deploys, resource reservations, etc. So I decided to try it and got something working in a couple hours which was a good sign.

Why self-host vs cloud?

Cloud is expensive and has a lot of vendor-specific cruft associated with it like IAM users, UI-based configurations, navigating how the various services connect with each other, etc.

Self-hosting gives me more flexibility and control which lets me run my apps cheaper and how I want. Of course it takes a lot more time in setting it up and potentially ongoing maintenance so there's a tradeoff.

I was radicalized when I actually did comparisons for price:performance while building CloudCompare - self hosting can often save you 10-100x over cloud for similar offerings.

Next

Anyway that's how I'm currently running my setup. It will probably change in the future but for now I'm pretty happy with it and think it will work for me for the next couple years.

Let me know if you have questions or would like to see code / examples. I've got a lot I could clean up and share but not sure if anyone's interested and in what parts.

Get $20 off Hetzner Cloud: If you're curious about self-hosting and want a cheap, quality VPS - I like and use Hetzner and you can get $20 off Hetzner Cloud when you use my link.
