Improving F# / Giraffe web benchmarks by 6.56x

Essay - Published: 2022.12.14 | 4 min read (1,147 words)

benchmarks | fsharp | giraffe | performance

In this post we'll dive into some small changes that resulted in a 6.56x improvement in requests / second benchmarks for a web server written in F# / Giraffe. Along the way, we'll touch on the nature of benchmarks, how the specific changes work, and end with some takeaways that may help you with your next performance bottleneck.

Web Benchmarks

Web benchmarks are efforts to simulate and measure the performance of different technologies under typical usage patterns. This data is often used to choose between technologies, find bottlenecks, and validate solutions.

Some popular web benchmarks include:

But before we continue, there are some caveats we should understand about benchmarks - namely that accurately and fairly evaluating any two unlike things is hard. Some challenges:

- It's hard to accurately simulate "normal" web traffic

- "Normal" web traffic patterns can vary widely across different use cases / organizations

- There are large incentives to game benchmarks, making benchmarked code diverge from "real" code

And likely a whole lot more. A full dive into these caveats is outside the scope of this post but I'd highly recommend How fast is ASP.NET Core? if you'd like to read more.

Interesting read on some dark patterns used to spoof techempower web framework benchmarks: https://t.co/8iDsMr4TRO

— Hamilton Greene (@SIRHAMY) November 26, 2022

If we look at just "Full" benchmarks we find #fsharp #giraffe in a respectable 21st - https://t.co/RagNLcyF3a pic.twitter.com/3t0NuiPECk

F# + Giraffe Benchmarks

F# on a Giraffe web server is currently my choice for building simple, performant backends. I like it because it combines the ergonomics of F# with the power of .NET.

For an example of an F# / Giraffe web server, see: Build a simple F# web API with Giraffe

Personally I use benchmarks as a smoke test for things to avoid. I don't need the fastest thing on the market (there are other principles / values that matter more to me like devx and support) but it should at least be average. If it's not at least average, that's a red flag to me that this thing may not scale and that it may not have good support (if there was support - benchmarks / perf would've been fixed).

Giraffe had the ergonomics but it also typically scored quite well in benchmarks - landing near the top of both F# and C# pools (upper middle of most other technologies).

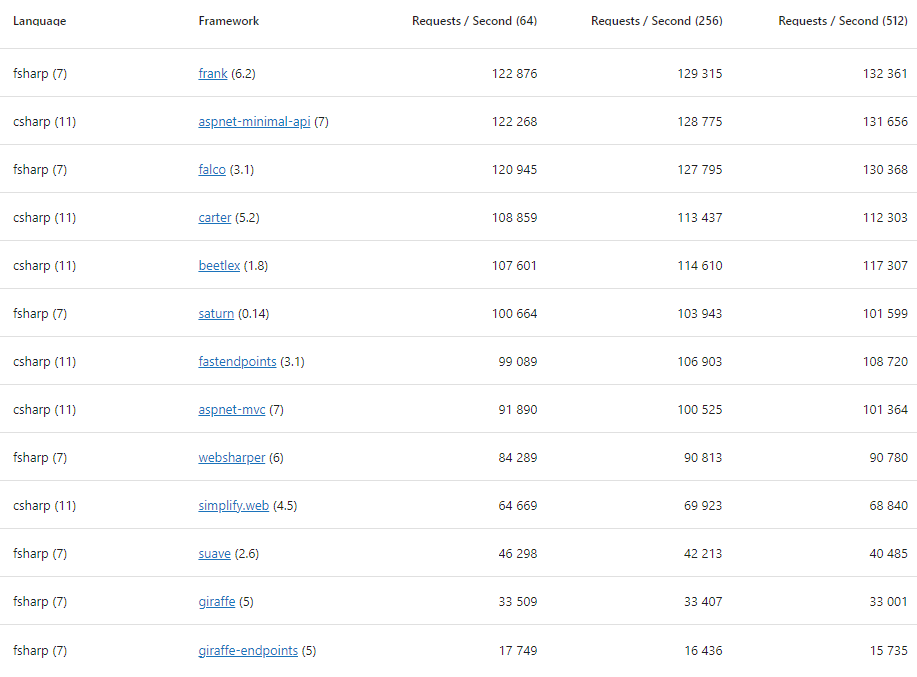

A few weeks ago I noticed that one benchmark was showing Giraffe as super slow - near the bottom of the F# / C# pools. What was weird is that Saturn - an F# web framework built on top of Giraffe - was beating it by ~3x.

- Saturn - 100,664 requests / second

- Giraffe - 33,509 requests / second

- Giraffe-endpoints - 17,749 requests / second

This goes against expectations. Building on top of something usually means wrapping it in abstractions so it can be incorporated into a new whole. Those abstractions add overhead, so the wrapper is typically slower than the original component at the original component's job.

So in this case we'd expect Giraffe and Saturn to perform about the same or, more likely, Giraffe to be somewhat faster than Saturn.

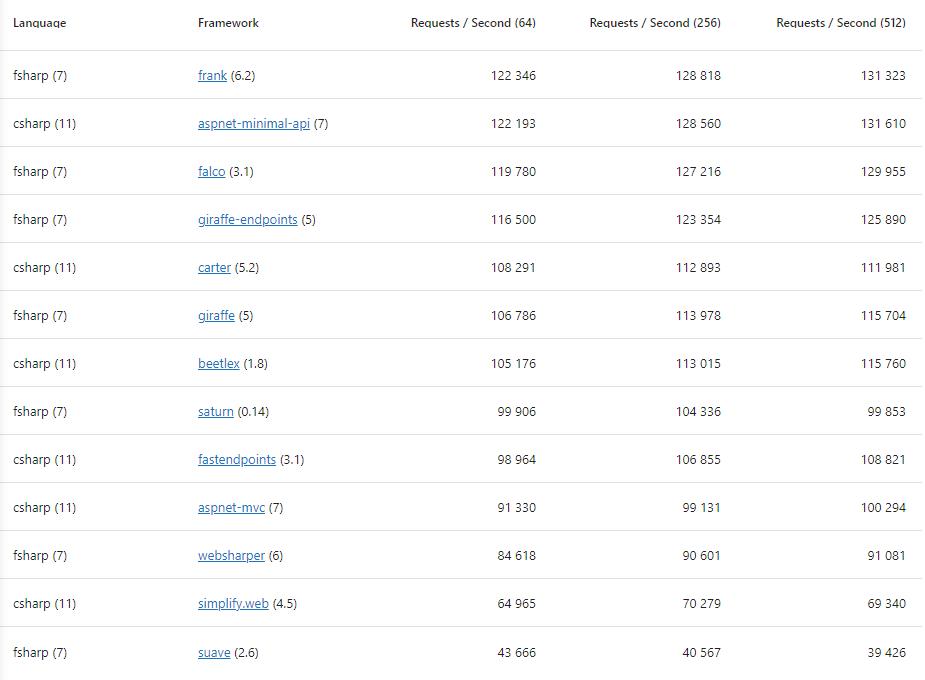

So I went digging and eventually came up with this PR to fix it, resulting in:

    open Microsoft.AspNetCore.Builder
    open Microsoft.AspNetCore.Hosting
    open Microsoft.Extensions.DependencyInjection
    open Microsoft.Extensions.Hosting
    open Microsoft.Extensions.Logging
    open Giraffe
    open Giraffe.EndpointRouting

    // Web app
    let webApp =
        [ GET [ routef "/user/%s" text
                route "/" (text "") ]
          POST [ route "/user" (text "") ] ]

    // Config and Main
    let configureApp (app: IApplicationBuilder) =
        app
            .UseRouting()
            .UseEndpoints(fun e -> e.MapGiraffeEndpoints(webApp))
        |> ignore

    let configureLogging (log : ILoggingBuilder) =
        log.ClearProviders()
        |> ignore

    let configureServices (services: IServiceCollection) = services.AddRouting() |> ignore

    let args = System.Environment.GetCommandLineArgs()

    Host
        .CreateDefaultBuilder(args)
        .ConfigureWebHost(fun webHost ->
            webHost
                .UseKestrel()
                .ConfigureLogging(configureLogging)
                .ConfigureServices(configureServices)
                .Configure(configureApp)
            |> ignore)
        .Build()
        .Run()

The big change was configureLogging, where we essentially remove the default logging providers so nothing gets written per request. I/O is often a bottleneck in systems so it makes sense that removing this whole class of work speeds up the benchmarks. I just didn't realize by how much.

- Giraffe-endpoints - 116,500 requests / second (was 17,749 requests / second, 6.56x)

- Giraffe - 106,765 requests / second (was 33,509 requests / second, 3.19x)

- Saturn - 99,906 requests / second (was 100,664 requests / second, -0.8%)
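To sanity check those multipliers, here's a minimal sketch of the arithmetic as an F# script. The names `results`, `speedup`, and `percentChange` are mine for illustration; the numbers are copied from the before / after lists in this post.

```fsharp
// Requests / second as (name, before, after), copied from the lists in this post.
let results =
    [ "Giraffe-endpoints", 17749.0, 116500.0
      "Giraffe", 33509.0, 106765.0
      "Saturn", 100664.0, 99906.0 ]

// Speedup is the new throughput divided by the old throughput.
let speedup (before: float) (after: float) = after / before

// Percent change relative to the old throughput (negative means slower).
let percentChange (before: float) (after: float) = (after - before) / before * 100.0

for (name, before, after) in results do
    printfn "%s: %.2fx (%.1f%%)" name (speedup before after) (percentChange before after)
```

Run with `dotnet fsi`, this reproduces the 6.56x and 3.19x figures and shows Saturn at roughly -0.8%, which is small enough that I'd read it as run-to-run noise rather than a real regression.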

Performance Takeaways

So what can we take away from this whole situation? I think a lot of things - with an asterisk.

Use benchmarks (with caution)

Benchmarks are useful tools for understanding how your technology actually performs in the wild. That said, it's important to keep in mind that it's hard to create realistic benchmarks and bad data can lead you astray.

If you use them directionally rather than literally you can typically get useful signal out of them.

Beware your logging

While you probably couldn't (and shouldn't) just turn off logging for a 6.5x performance improvement, I think it's likely most systems could tune their logging and see substantial improvements in performance.

Whether or not this is worth it for you likely depends on whether it's a bottleneck or not but checking in on this from time to time seems like a good idea.
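One rough way to get a feel for whether logging is a bottleneck is to time the same loop with and without a log write. This is a toy sketch, not the benchmark's code: `handle` and `timeRequests` are hypothetical names, the "handler" does no real work, and writing a line to stderr stands in for whatever your logging provider actually does.

```fsharp
open System
open System.Diagnostics
open System.IO

// Hypothetical stand-in for a request handler: optionally writes one log line per call.
let handle (log: TextWriter option) (i: int) =
    match log with
    | Some w -> w.WriteLine(sprintf "handled request %d" i)
    | None -> ()

// Runs the handler n times and returns the elapsed wall-clock milliseconds.
let timeRequests (log: TextWriter option) (n: int) : int64 =
    let sw = Stopwatch.StartNew()
    for i in 1 .. n do
        handle log i
    sw.Stop()
    sw.ElapsedMilliseconds

let n = 50_000
let quietMs = timeRequests None n
let loggedMs = timeRequests (Some Console.Error) n
printfn "no logging:   %d ms" quietMs
printfn "with logging: %d ms" loggedMs
```

The absolute numbers will vary a lot by machine and by where stderr is pointed, so treat the gap as directional only. For a real service, a profiler or a load test with logging toggled will tell you far more than a loop like this.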

In .NET land a simple thing to check is that your Logging Level is set and used appropriately throughout your app.
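As a sketch of what that could look like, here's a variant of `configureLogging` that keeps the default providers but raises the minimum level, instead of clearing providers entirely. `SetMinimumLevel`, `AddFilter`, and `IsEnabled` are standard Microsoft.Extensions.Logging calls; the `Warning` threshold, the "Microsoft.AspNetCore" category filter, and the `logRequest` helper are assumptions for illustration, not what the benchmark PR does. It's meant to drop into a web project like the one above, where the logging package is already referenced.

```fsharp
open Microsoft.Extensions.Logging

// Assumed alternative to ClearProviders(): keep logging on, but only for Warning and above.
let configureLogging (log: ILoggingBuilder) =
    log
        .SetMinimumLevel(LogLevel.Warning)
        // Quiet the framework's own per-request logs (category name chosen for illustration).
        .AddFilter("Microsoft.AspNetCore", LogLevel.Warning)
    |> ignore

// Hypothetical helper: check the level first so the disabled path stays cheap.
let logRequest (logger: ILogger) (path: string) =
    if logger.IsEnabled(LogLevel.Debug) then
        logger.LogDebug("Handling {Path}", path)
```

Note that settings in the "Logging" section of appsettings.json can also affect which levels are emitted, so it's worth checking both places before assuming your levels are what you think they are.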

Different speeds matter differently

Depending on the scenario, the importance of different speeds varies drastically. For a planet-scale company like Facebook, squeezing every last drop of performance out of their programming language, web servers, and data centers is worth billions of dollars. But for most orgs, horizontal scaling works, which means raw performance is not the bottleneck.

Instead, most of the time the most important kind of speed is in making good decisions, testing ideas out in the marketplace, and capitalizing on strong positions. These kinds of problems benefit more from speed of implementation, good data / visibility into your product, and reliable systems than from a few thousand more requests / second.

Think about what's most impactful for your org and keep the main thing the main thing.

Next Steps

Thanks for reading! I have a few more ideas for how to further improve these benchmarks without #cheating so will report back based on results.

If you're curious about getting started with ergonomic, efficient web services in F# check out these resources:
