F# / Giraffe Web APIs - 1% faster

Essay - Published: 2022.12.28 | 3 min read (924 words)

fsharp | giraffe | performance


In this post we'll dive into a tiny code optimization that led to a 0.98% improvement in F# / Giraffe's web benchmarks measuring Requests / Second. We'll discuss what the change is as well as why you should probably apply it to your own web servers in production.

F# / Giraffe

First let's make sure we're on the same page with respect to the technologies we'll be talking about.

Giraffe is a popular web framework for F# that provides a lightweight, functional interface on top of the performant, flexible ASP.NET web framework sponsored by Microsoft. I really love this combination, and it has been my choice for building web APIs for the past several months.

Going into the details of this web server paradigm is beyond the scope of this post but if you're interested in learning more you can read:

Benchmarks (*)

Now that we know the specific technologies we're talking about, we can move on to benchmarks. It's important to note that the performance improvements I'm claiming were measured via benchmarks, and all benchmarks have asterisks.

These asterisks typically stem from one core challenge:

It's hard to make consistent, authentic simulations of the real world (and there are many incentives against doing so).

Diving into this is, again, out of the scope of this post but it's sufficient to know that benchmarks are useful but all have their own asterisks.

For a more in-depth dive into benchmark dark patterns, see: Improving F# / Giraffe web benchmarks by 6.56x

The benchmarks we're focused on here are The Benchmarker - Web Benchmarks, which aim to measure the general Requests / Second and latency of various web frameworks.

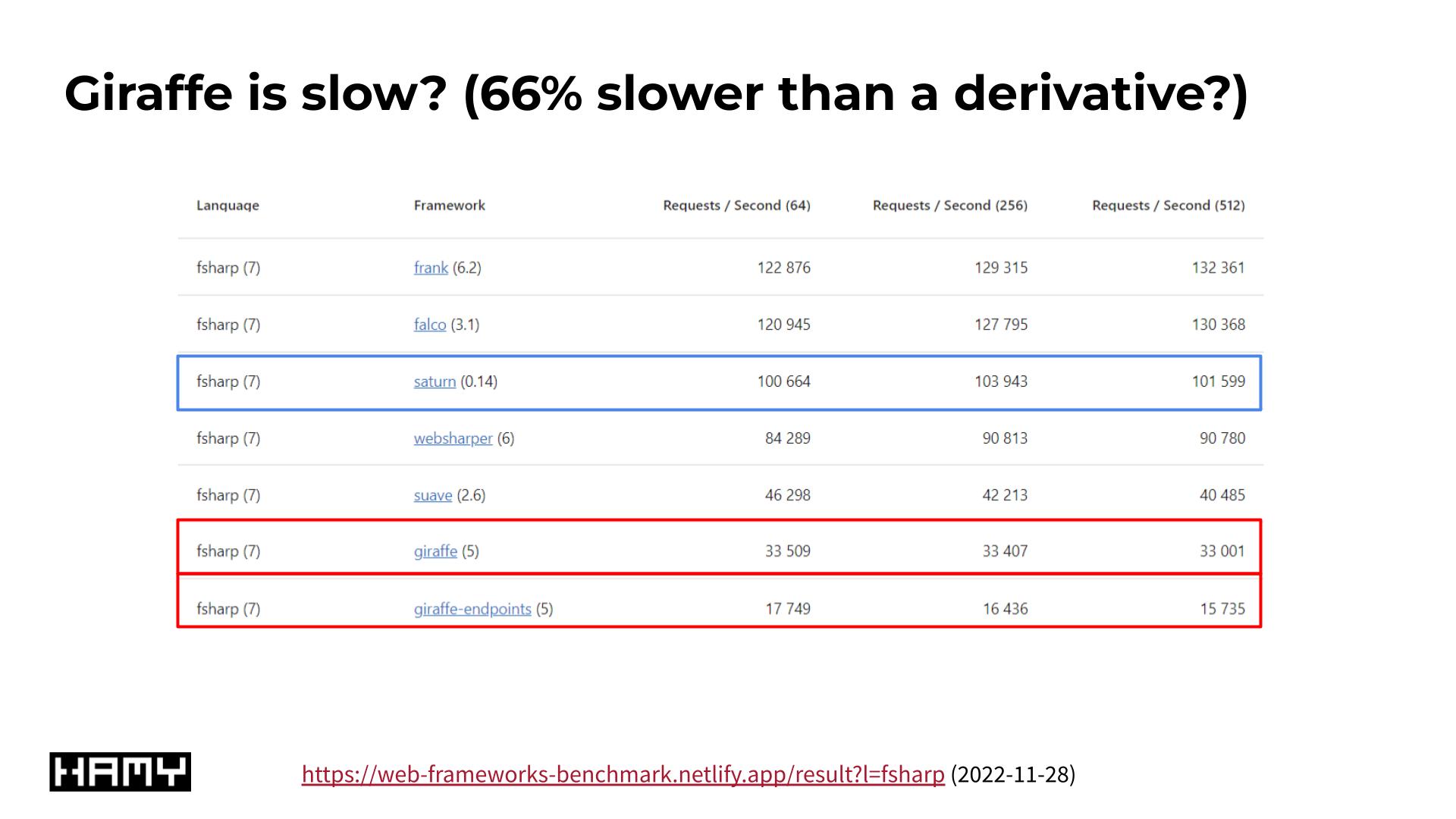

Poor benchmark results for F# / Giraffe

In my previous investigation into F# / Giraffe's benchmark performance, I fixed inconsistencies in the benchmark implementation, resulting in a 6.56x improvement in performance. The fix was to remove unnecessary I/O that other frameworks were skipping - leveling the playing field and vastly improving results.

While I was investigating these inconsistencies, I also noticed a few other opportunities that could be tried - which is what brings us here today.

The 1% fix

The new 0.98% fix is very similar to the previous one - we're removing unnecessary operations that occur on every web request. For trivial requests (like the ones in benchmarks) even tiny bits of overhead can lead to large decreases in performance as shown in my last performance investigation.

The change is to configure Kestrel (ASP.NET's default web server) to not add its default Server header to every response (see: GitHub PR).

Program.fs (before change)

```fsharp
// ...
Host
    .CreateDefaultBuilder(args)
    .ConfigureWebHost(fun webHost ->
        webHost
            .UseKestrel()
            .ConfigureLogging(configureLogging)
            .ConfigureServices(configureServices)
            .Configure(configureApp)
            |> ignore)
    .Build()
    .Run()
```

Program.fs (after change)

```fsharp
// ...
Host
    .CreateDefaultBuilder(args)
    .ConfigureWebHost(fun webHost ->
        webHost
            // Stop Kestrel from adding its default Server header to responses
            .UseKestrel(fun c -> c.AddServerHeader <- false)
            .ConfigureLogging(configureLogging)
            .ConfigureServices(configureServices)
            .Configure(configureApp)
            |> ignore)
    .Build()
    .Run()
```

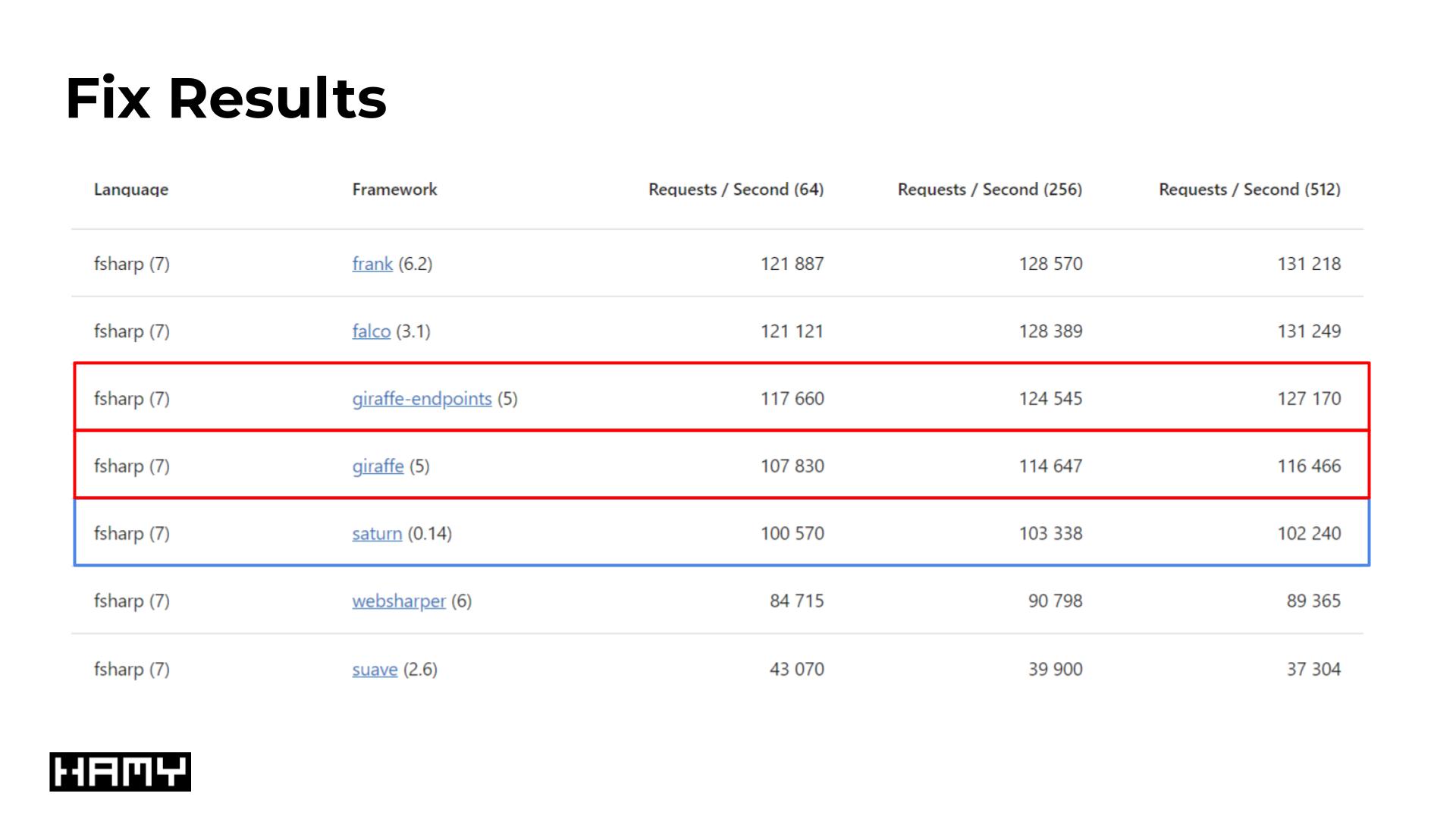

Benchmark Fix Results

Upon making this change, we see improvements of 0.98% and 1.00% in Requests / Second for the Giraffe and Giraffe Endpoints benchmarks, respectively:

- Giraffe
  - Before (2022.12.12) - 106786
  - After (2022.12.17) - 107830
  - Delta: 1044 (0.98%)
- Giraffe Endpoints
  - Before (2022.12.12) - 116500
  - After (2022.12.17) - 117660
  - Delta: 1160 (1.00%)

Numbers represent Requests / Second benchmarks
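The percentages are the change relative to the "before" number. Here's a minimal sketch of that arithmetic using only the figures listed above (the helper name `percentImprovement` is mine, purely for illustration):

```fsharp
// Relative improvement as a percentage of the "before" value.
// `percentImprovement` is an illustrative helper, not part of the benchmark suite.
let percentImprovement (before: float) (after: float) : float =
    (after - before) / before * 100.0

let giraffe = percentImprovement 106786.0 107830.0
let giraffeEndpoints = percentImprovement 116500.0 117660.0

printfn "Giraffe: %.2f%%" giraffe
printfn "Giraffe Endpoints: %.2f%%" giraffeEndpoints
```

Rounded to two decimals, this gives 0.98% and 1.00%, matching the deltas above.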

What this does:

Kestrel is ASP.NET's default web server. It is fast and cross-platform and gets very good community / corporate support. So it's a reasonable default.

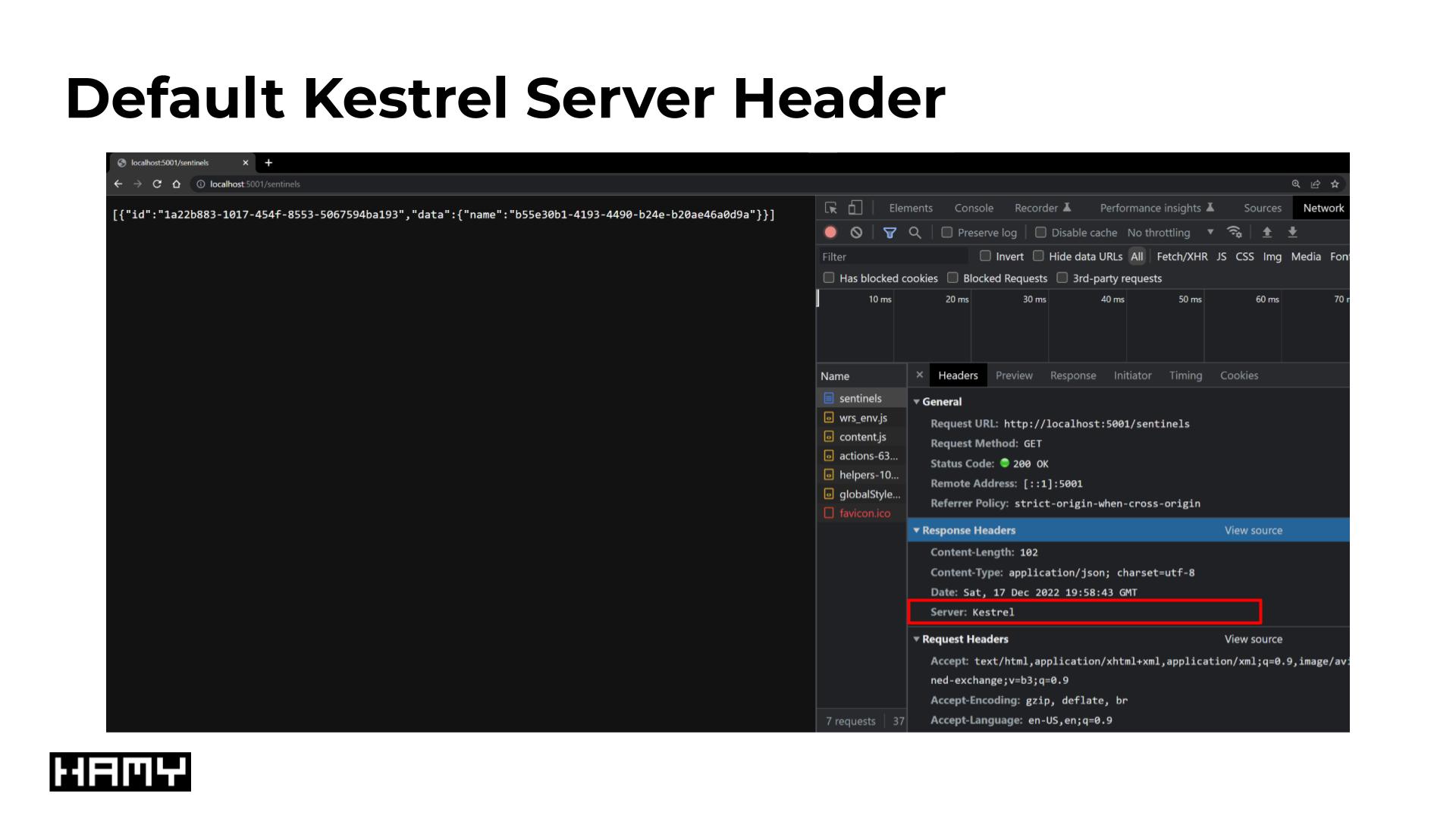

Default Kestrel Server Header

A more questionable default is that Kestrel adds a Server header to every response, which tells clients what kind of server it is and what it's running. This is questionable for a few reasons, mostly:

- Performance - unnecessary overhead / payload

- Security - the more info outsiders have, the more likely they can leverage it for attacks

These are relatively minor downsides but it's hard to think of a big upside to having this header. The only one I've been able to find so far is that IIS seems to require this for routing - though this seems more like artificial vendor lock-in than a useful feature.

References that mention this is a poor security choice include: Gigi Labs, Karthik Tech Blog, and C# Corner.
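If you want to check whether your own server still sends the header, something like the following should work. It's a rough sketch, not a definitive tool: the helper names are mine, and the URL in the usage comment is a hypothetical local address you'd swap for wherever your server listens.

```fsharp
open System.Net.Http

// Returns true when the response carries a Server header.
let hasServerHeader (response: HttpResponseMessage) : bool =
    response.Headers.Contains("Server")

// Sends one GET request and reports whether a Server header came back.
// Blocks on the request for simplicity; fine for a one-off check.
let checkServerHeader (url: string) : bool =
    use client = new HttpClient()
    use response = client.GetAsync(url).GetAwaiter().GetResult()
    hasServerHeader response

// Example usage (assumes a server is listening on this hypothetical address):
// checkServerHeader "http://localhost:5000/" |> printfn "Server header present: %b"
```

With the default Kestrel configuration I'd expect this to report true, and false once AddServerHeader is set to false.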

Why you should do this too:

Ultimately this isn't going to be a huge win - 1.00% is the max you could expect in a hermetic, trivial use case where we only measure a web server's overhead. In real use cases doing real work, the web server's overhead will be dwarfed by that real work - likely by many multiples.

That said, it's hard to see a downside to this change (unless you're stuck on IIS in which case I'm sorry for you).

- Performance - it reduces overhead present on every single request

- Security - it leaks less info to the internet (a large, scary place)
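To get a rough feel for the payload side of this, here's a back-of-the-envelope sketch. It assumes the header goes over HTTP/1.1 as the line `Server: Kestrel` followed by CRLF, and it borrows the Giraffe "after" Requests / Second figure from the benchmark results. It only counts bytes on the wire, not the CPU cost of writing them.

```fsharp
// Assumption: the header is sent as the line "Server: Kestrel" plus CRLF (HTTP/1.1).
let serverHeaderLine = "Server: Kestrel\r\n"

// The line is plain ASCII, so this is one byte per character.
let bytesPerResponse = System.Text.Encoding.ASCII.GetByteCount(serverHeaderLine)

// Giraffe Requests / Second after the change, taken from the benchmark results.
let requestsPerSecond = 107830

let bytesPerSecond = int64 bytesPerResponse * int64 requestsPerSecond

printfn "%d bytes per response, %d bytes per second" bytesPerResponse bytesPerSecond
```

Under those assumptions that's 17 bytes per response, or roughly 1.8 MB per second at benchmark throughput. Small, but it's paid on every single response for no obvious benefit.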

This seems like a pretty obvious win-win to me.

Next Steps

I'm off to port this optimization to CloudSeed - the F# / SvelteKit project boilerplate I use to jumpstart all my projects. If you're looking to start your own fullstack project, consider CloudSeed which includes many of the best practices and optimizations I've found while building and launching my own projects.
